Responsible AI is reshaping how organizations design, deploy, and govern intelligent systems. It blends ethics, risk management, and practical controls to keep innovation aligned with societal values. A strong emphasis on AI ethics helps teams detect bias, protect privacy, and promote fair outcomes. Leaders are balancing speed with AI governance, seeking processes that stakeholders can trust. By embracing responsible practices, companies can build trustworthy products while navigating evolving regulations.

In practical terms, responsible AI means systems that act consistently with stated principles and human-centered design. Closely related terms include ethical AI, governance-minded development, and transparent decision-making, all of which support public confidence. Organizations implement governance frameworks, risk assessments, and explainability practices to show how decisions are made and iterated over time. A focus on data stewardship, privacy protections, and ongoing monitoring helps meet regulatory expectations while delivering real value to users.

Defining Responsible AI: Principles, Pillars, and Practical Governance

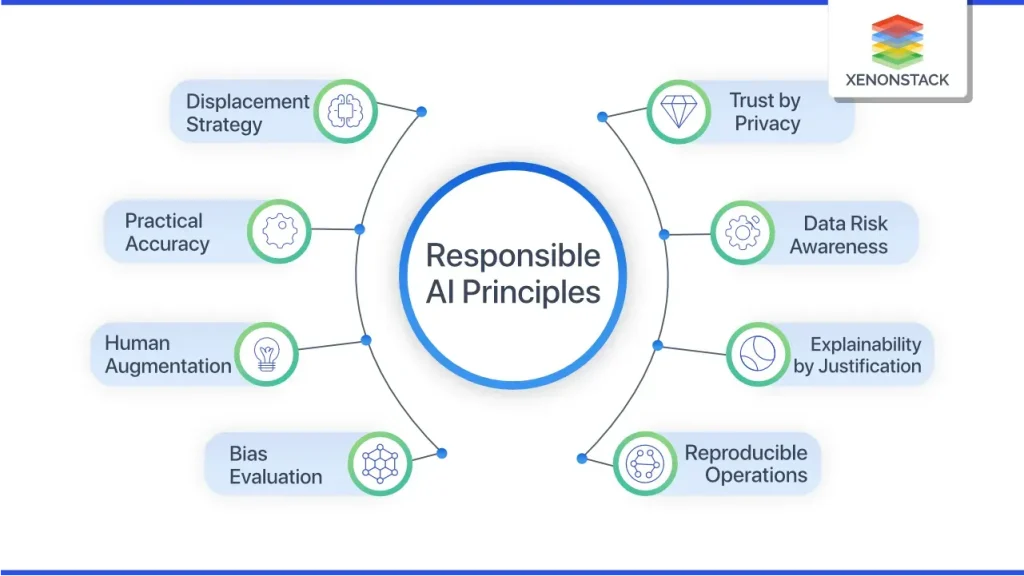

Responsible AI sits at the intersection of ethics, governance, and technology. It encompasses the core ideas of AI ethics—fairness, privacy, safety, and accountability—and translates them into practical governance and technical controls. It is not a single standard or checklist but an ongoing discipline that spans people, processes, and systems. In practice, five guiding pillars—fairness, transparency, accountability, privacy, and safety—help organizations assess risk, set expectations, and build products that users can trust.

To operationalize Responsible AI, organizations establish governance structures, lifecycle processes, and cross-functional collaboration that align product strategy with societal values. This includes defining ownership, implementing model lifecycle management, and integrating risk assessments early in development. By embedding governance, standardizing terminology, and creating clear metrics, teams can balance speed with accountability and begin turning ethical commitments into measurable outcomes.

The Intersection of AI Ethics and Real-World Impact

AI ethics addresses bias, discrimination, and the broader social consequences of automated decisions. A Responsible AI program starts with bias detection and mitigation across data collection, labeling, and model training, while also focusing on representativeness to ensure diverse populations and use cases are reflected. Beyond algorithmic behavior, ethics considers how explanations are presented to users and whether people retain meaningful oversight in high-stakes decisions.

Ethical design emphasizes inclusive thinking—datasets that minimize stereotype amplification, fair evaluation metrics, and transparent communication about model limitations. Practical tools such as model cards, impact assessments, and post-deployment monitoring help detect drift and unintended harms. In this way, ethics becomes a living part of development, guiding decisions around transparency, user control, and ongoing responsibility.
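
To make the model card idea concrete, here is a minimal sketch of one as a Python dataclass. The field names and the `missing_sections` helper are assumptions chosen for illustration, not a standard schema; a real program would adapt the sections to its own review process.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class ModelCard:
    """Hypothetical, minimal model card; the fields are illustrative only."""
    name: str
    version: str
    intended_use: str
    out_of_scope_uses: List[str] = field(default_factory=list)
    training_data_summary: str = ""
    evaluation_metrics: Dict[str, float] = field(default_factory=dict)
    known_limitations: List[str] = field(default_factory=list)

    def missing_sections(self) -> List[str]:
        """Return the names of sections that are still empty."""
        return [key for key, value in asdict(self).items() if not value]

    def to_json(self) -> str:
        """Serialize the card so it can be published alongside the model."""
        return json.dumps(asdict(self), indent=2)


# Example values are made up for the sketch.
card = ModelCard(
    name="loan-approval-classifier",
    version="1.2.0",
    intended_use="Rank applications for manual review.",
    evaluation_metrics={"accuracy": 0.91},
)
print(card.missing_sections())
```

A review step could refuse to publish a card while `missing_sections()` is non-empty, which keeps limitations and out-of-scope uses from being silently skipped.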

Navigating AI Regulation: Global Landscape and Compliance Playbooks

Regulation around AI is expanding globally as policymakers respond to rapid advances. AI regulation commonly adopts a risk-based approach, requiring organizations to assess, document, and mitigate potential harms. Across regions, this translates into data governance requirements, disclosures about model purposes, and clear accountability structures for AI-driven decisions.

The regulatory landscape varies by jurisdiction but tends to include elements such as transparency about when AI is used, governance and accountability for AI initiatives, risk assessments for high-risk applications, and robust data privacy and security practices. Organizations operating internationally should align with frameworks like the EU AI Act's rules for high-risk systems, the OECD AI Principles, and other regional guidelines, while maintaining product velocity through adaptable compliance programs that span multiple markets.

Building Trustworthy AI: Transparency, Accountability, and User-Centric Design

Trustworthy AI relies on transparent, meaningful explanations that resonate with diverse audiences—from data scientists to business leaders and customers. Techniques such as model cards, feature importance analyses, and local explanations help stakeholders understand why a decision was made. In high-stakes contexts, human-in-the-loop mechanisms ensure that humans can review or override automated decisions when necessary.
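
One way a human-in-the-loop mechanism might be wired up is a routing rule that sends a decision to a reviewer when it is high-stakes or when the model is not confident. The sketch below assumes a hypothetical `Prediction` record and a `min_confidence` threshold of 0.9; both are illustrative choices, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    label: str
    confidence: float  # model's probability for `label`, in [0, 1]
    high_stakes: bool  # assumed to be set by product policy, not by the model


def route(prediction: Prediction, min_confidence: float = 0.9) -> str:
    """Return 'auto' to apply the decision or 'human_review' to queue it."""
    if prediction.high_stakes or prediction.confidence < min_confidence:
        return "human_review"
    return "auto"


print(route(Prediction("approve", 0.97, high_stakes=False)))
print(route(Prediction("approve", 0.97, high_stakes=True)))
print(route(Prediction("deny", 0.60, high_stakes=False)))
```

Under this policy every high-stakes decision is reviewed regardless of confidence. A team could relax that, but doing so is a governance decision rather than a tuning detail.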

A user-centric approach to transparency includes communicating limitations, providing controls, and ensuring data handling respects privacy. Accountability structures—clear roles, governance processes, and ongoing monitoring—support fair outcomes. By combining explainability with strong privacy and security practices, organizations can cultivate trust and demonstrate responsible stewardship of AI systems.

AI Governance at Scale: Frameworks, Roles, and Data Management

Effective AI governance requires a structured framework that spans the organization. An AI steering committee, defined ownership for AI products, and a lifecycle process covering data collection, model development, deployment, monitoring, and decommissioning create a repeatable path to responsible outcomes. This governance layer aligns with corporate risk appetite, legal requirements, and ethical guidelines, while standardizing terminology, metrics, and reporting for all stakeholders.

Scaling governance also hinges on robust data and model management. Proactive data provenance, consent, privacy protections, and bias detection are essential, as are versioned models, lineage tracking, feature usage records, and rollback plans. A centralized dashboard view of AI assets and risks helps teams maintain accountability as the complexity of AI systems grows.
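
As a rough illustration of versioned models, lineage tracking, and rollback plans, the following sketch keeps an in-memory registry. The `ModelRegistry` class and its fields are hypothetical; a production system would typically persist this information in a dedicated registry service.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class ModelVersion:
    version: str
    training_data_id: str      # lineage: dataset snapshot used for training
    features: Tuple[str, ...]  # feature usage record


class ModelRegistry:
    """Hypothetical in-memory registry with a deployment history."""

    def __init__(self) -> None:
        self._versions: Dict[str, ModelVersion] = {}
        self._history: List[str] = []  # deployment order, newest last

    def register(self, model: ModelVersion) -> None:
        if model.version in self._versions:
            raise ValueError(f"version {model.version} already registered")
        self._versions[model.version] = model

    def deploy(self, version: str) -> None:
        if version not in self._versions:
            raise KeyError(f"unknown version {version}")
        self._history.append(version)

    def current(self) -> Optional[ModelVersion]:
        return self._versions[self._history[-1]] if self._history else None

    def rollback(self) -> ModelVersion:
        """Revert to the previously deployed version."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier deployment to roll back to")
        self._history.pop()
        return self._versions[self._history[-1]]


registry = ModelRegistry()
registry.register(ModelVersion("1.0.0", "snapshot-2024-01", ("income", "tenure")))
registry.register(ModelVersion("1.1.0", "snapshot-2024-03", ("income", "tenure", "region")))
registry.deploy("1.0.0")
registry.deploy("1.1.0")
print(registry.rollback().version)
```

Because each version records its dataset snapshot and feature list, a rollback also tells reviewers which data and inputs are back in effect.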

From Theory to Practice: Implementing Responsible AI in Product Development

To bring Responsible AI from concept to product, start with a clear policy that articulates ethics, governance, and accountability expectations. Build a cross-functional AI governance body, create inventories of data and models, and implement continuous monitoring to detect drift, fairness issues, and privacy concerns in production. Develop explainability capabilities tailored to different audiences and establish remediation paths when explanations or outcomes are insufficient.
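
Drift monitoring can take many forms. One commonly used statistic is the Population Stability Index (PSI), which compares the binned distribution of a feature in production against a baseline. The sketch below is a minimal standard-library version; the equal-width binning, the `eps` floor for empty bins, and the sample data are assumptions made for illustration.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index: sum((a_i - e_i) * ln(a_i / e_i)) over bins."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            index = min(max(index, 0), bins - 1)  # clamp out-of-range values
            counts[index] += 1
        # Floor at eps so empty bins do not produce log(0) or division by zero.
        return [max(count / len(values), eps) for count in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]          # training-time feature values
production = [0.5 + i / 200 for i in range(100)]  # shifted toward higher values
print(round(psi(baseline, baseline), 4))
print(psi(baseline, production) > 0.25)
```

A frequently quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift, but that cutoff is a convention rather than a standard, and each team should calibrate alert thresholds on its own data.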

Practical steps include ongoing training on bias, privacy, and regulatory considerations; regular independent audits; and transparent disclosures about when and how AI is used. Align adoption with recognized frameworks (NIST AI RMF, OECD AI Principles, ISO/IEC standards) while remaining adaptable to domain-specific requirements. Through governance, transparency, and data protection, organizations can deliver innovative AI products that are trustworthy, compliant, and aligned with user rights.

Frequently Asked Questions

What is Responsible AI, and how do AI ethics and AI governance shape its practice?

Responsible AI means designing, building, and deploying AI that respects human values and minimizes harm. It rests on AI ethics and AI governance to establish policies, ownership, and controls across fairness, transparency, accountability, privacy, and safety. Practically, teams use model cards, impact assessments, data provenance, and continuous monitoring to make decisions explainable and auditable, supporting trustworthy AI. It’s an ongoing discipline that balances innovation with risk management.

How does AI regulation influence Responsible AI and trustworthy AI deployment?

AI regulation provides guardrails for Responsible AI by defining risk-based requirements, data handling, and accountability structures. It shapes product design through disclosures, governance roles, and impact assessments, guiding trustworthy AI deployment. Firms align with frameworks such as the EU AI Act's high-risk rules and the OECD AI Principles while embedding compliant processes early to preserve velocity. Ongoing regulatory insight helps sustain responsible innovation.

What role does algorithm transparency play in building trustworthy AI?

Algorithm transparency helps users and stakeholders understand how decisions are made, a cornerstone of trustworthy AI. By exposing inputs, model logic, and limitations through tools like model cards and explanations, organizations improve accountability and user confidence. Transparency should be audience-appropriate and paired with human-in-the-loop controls where risk is high. This supports responsible AI without exposing sensitive system details.

What are practical steps to implement AI governance for Responsible AI across the product lifecycle?

Practical steps start with a formal AI governance framework: assign ownership, create an AI steering committee, and document end-to-end lifecycle processes. Build data and model inventories, require bias and fairness testing, and implement monitoring for drift and privacy issues. Establish clear disclosures, audit trails, and cross-functional reporting to support accountability and regulatory alignment, while preserving product velocity.

How can organizations address bias and fairness within Responsible AI, and what role does AI ethics play?

AI ethics guides fairness and bias mitigation in Responsible AI. Begin with representative data, bias testing, and fairness metrics across groups, then apply debiasing techniques and calibration checks. Conduct independent audits and post-deployment monitoring to identify disparate impact and correct it. Transparent disclosures and accountability mechanisms build trust and support trustworthy AI outcomes.
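
Two simple group fairness metrics are sketched below: the demographic parity difference and the disparate impact ratio, both computed from per-group selection rates. The function names and the toy records are assumptions for illustration. These metrics capture only one notion of fairness, so they complement audits and calibration checks rather than replace them.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(records: Iterable[Tuple[str, int]]) -> Dict[str, float]:
    """Share of positive outcomes (1) per group from (group, outcome) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += outcome
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(rates: Dict[str, float]) -> float:
    """Gap between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates: Dict[str, float]) -> float:
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Toy data: group A is selected 8 times out of 10, group B 4 times out of 10.
records = [("A", 1)] * 8 + [("A", 0)] * 2 + [("B", 1)] * 4 + [("B", 0)] * 6
rates = selection_rates(records)
print(rates)
print(demographic_parity_difference(rates), disparate_impact_ratio(rates))
```

A ratio below 0.8 is often flagged for review under the widely cited "four-fifths" rule of thumb, though the appropriate threshold depends on the domain and applicable law.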

How can organizations balance speed and accountability in Responsible AI under evolving AI regulation and governance?

Balance speed and accountability by embedding governance into product teams from the start. Define policies, automate compliance checks, and maintain versioned models with rollback plans. Use continuous monitoring for drift, safety, and privacy, and provide explainability for stakeholders. Align with evolving AI regulation and governance while enabling modular, auditable architectures that sustain innovation.
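
Automated compliance checks could be expressed as a small release gate that runs before deployment. In the sketch below, the release fields (`model_card`, `disparate_impact_ratio`, `rollback_version`), the individual checks, and the 0.8 threshold are all hypothetical; each organization would substitute checks derived from its own policies.

```python
from typing import Any, Callable, Dict, List, Tuple

Release = Dict[str, Any]
Check = Callable[[Release], Tuple[bool, str]]


def has_model_card(release: Release) -> Tuple[bool, str]:
    return bool(release.get("model_card")), "model card attached"


def fairness_within_limit(release: Release) -> Tuple[bool, str]:
    # Ratio of the lowest to the highest group selection rate.
    # 0.8 is an illustrative threshold, not a legal standard.
    ratio = release.get("disparate_impact_ratio", 0.0)
    return ratio >= 0.8, "disparate impact ratio >= 0.8"


def has_rollback_target(release: Release) -> Tuple[bool, str]:
    return bool(release.get("rollback_version")), "rollback version recorded"


def run_gate(release: Release, checks: List[Check]) -> List[str]:
    """Return descriptions of failed checks; an empty list means the gate passes."""
    failures = []
    for check in checks:
        passed, description = check(release)
        if not passed:
            failures.append(description)
    return failures


CHECKS: List[Check] = [has_model_card, fairness_within_limit, has_rollback_target]
candidate = {
    "model_card": "cards/v2.json",
    "disparate_impact_ratio": 0.86,
    "rollback_version": None,  # missing on purpose to show a blocked release
}
failures = run_gate(candidate, CHECKS)
print("blocked" if failures else "approved", failures)
```

Keeping each check as a small function makes the gate auditable: the list of checks documents the policy, and a failed release reports exactly which expectation was not met.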

| Topic | Key Points |
|---|---|
| What is Responsible AI? | - Designs/deploys AI to align with societal values, protect rights, and minimize harm.<br>- Five guiding pillars: fairness, transparency, accountability, privacy, and safety.<br>- Ongoing process spanning people, processes, and technology. |
| AI Ethics | - Bias detection/mitigation during data collection, labeling, and training.<br>- Representativeness of training data for diverse populations.<br>- Clear user explanations and meaningful, actionable insights for non-technical stakeholders.<br>- Human oversight when needed; use of model cards, impact assessments, and post-deployment monitoring. |
| Regulation and Governance | - Global, risk-based regulation focusing on assessing and mitigating harms.<br>- Data governance, model purpose documentation, and clear accountability structures.<br>- Common elements: transparency, governance/accountability, risk assessment, privacy/security.<br>- Frameworks: EU AI Act high-risk rules, OECD AI Principles; align globally for multi-regulatory expectations while maintaining speed. |
| Best Practices | - Establish robust AI governance with cross-functional ownership and lifecycle processes.<br>- Risk-based data/model management (provenance, consent, privacy, bias testing, versioning).<br>- Enhance transparency and explainability using model cards, feature importance, and local explanations; human-in-the-loop where appropriate.<br>- Prioritize privacy, security, and data protection throughout the lifecycle.<br>- Foster fairness and bias mitigation with audits, diverse testing, and monitoring.<br>- Build a culture of accountability and continuous learning. |
| Key components and frameworks | - Frameworks/standards guide practice: NIST AI RMF; ISO/IEC; IEEE.<br>- Adopt a layered approach combining governance, controls, and ongoing evaluation to stay aligned with evolving expectations. |
| Case studies: practical applications | 1) Healthcare: governance, explainability, privacy assessments; model cards and human-in-the-loop for high-stakes diagnoses.<br>2) Finance: bias mitigation, model risk management with versioning and monitoring; transparent disclosures and data protection.<br>3) Public sector: transparency, oversight, user disclosures; auditable logs/dashboards for independent evaluation. |
| Challenges | - Balancing speed and safety; integrate risk checks early and use iterative governance.<br>- Managing complexity of data pipelines/models/external sources with modular, auditable architectures.<br>- Aligning diverse stakeholders; transparent metrics and inclusive governance.<br>- Keeping pace with regulation; monitor developments and adapt processes without stalling delivery. |
| Practical steps to begin/advance | - Define a clear Responsible AI policy covering ethics, governance, accountability.<br>- Create an AI governance body with cross-functional representation and ownership.<br>- Build data/model inventories for provenance and risk tracking.<br>- Implement continuous monitoring for drift, fairness, and privacy.<br>- Develop explainability capabilities for different audiences and remediation paths.<br>- Invest in training on bias, privacy, security, and regulatory considerations.<br>- Conduct independent audits and publish accessible disclosures about AI use.<br>- Align with frameworks (NIST AI RMF, OECD AI Principles, ISO/IEC) and tailor to your domain. |
| The evolving future of Responsible AI | Responsible AI will stay central as AI capabilities expand. Regulators, customers, and investors demand accountability and governance. Organizations embedding ethics, regulation, and best practices will build trust, reliability, and long-term value. The shift moves from “can we build this?” to “should we build this, and how can we do it responsibly?” emphasizing governance, transparency, and continuous improvement. |

Conclusion

Responsible AI is not a one-time checklist but a continuous discipline that integrates ethics, regulation, and best practices into every stage of the AI lifecycle. By prioritizing AI ethics, adhering to evolving AI regulation, and implementing practical governance and technical controls, organizations can deliver transformative technology that remains safe, fair, and accountable. The path to trustworthy AI requires commitment, collaboration, and a culture that values responsible innovation as much as competitive advantage. Embracing governance, transparency, and data protection will help you build AI systems that users trust and regulators approve—paving the way for sustainable, responsible growth in a world increasingly shaped by AI.